We had a running battle with our copy-eds about this. Apparently the 'ize' spelling can be MORE correct in some circumstances, something to do with the original Latin word or something.

We had a list of words in British English where we were told we must use 'ize'. My argument was that our users wouldn't know about the Latin, would just think it was a typo and would judge us accordingly.

It's a common misconception that -ize is an Americanism. It's not, it's British English. Over time we dropped it in favour of -ise whereas the US kept it.

One could argue that it's technically more correct, but the fact is that it used to be more correct, and where do you stop on that particular road? Chaucer? Anglo-Frisian runes?

Hardly riveting stuff, but this is my latest attempt at using AI:

"Yes, the eufy E340 video doorbell is compatible with the C210. The eufy C210 is an older model that is not compatible with the E340."

So: yes, it is compatible, followed by no, it's not compatible.

I tried it on a tweak to a legal document and it was equally pathetic.

It's a common misconception that -ize is an Americanism. It's not, it's British English. Over time we dropped it in favour of -ise whereas the US kept it.

This is a common thing with "polluted" American English - often British English has evolved and modern American English actually reflects what used to be British English.

A bike-related one. I ordered a simple item (a brake rotor) a week ago, with 48-hour delivery.

Just emailed them asking whether there was a problem with the order, as it hasn't been marked as dispatched:

Your order is still being processed and has not yet been dispatched, which is why you have not received a dispatch note. At this time, there is no indication that your order is awaiting any parts for fulfillment. Once your order is dispatched, you will receive a notification with tracking information.

Great. Thanks Al.

I replied asking for further clarification (it did offer further clarification, I might add):

Your order is still being processed and, at this time, we are unable to provide an exact dispatch date. Please be assured that orders are typically processed within a few business days, and you will receive notification with tracking details as soon as your order is dispatched. There are no current indications of stock or part issues with your order.

"Ill get to it when i get to it, now sod off, im flirting with the ebikes"

I did my PhD in the late 80s and early 90s on neural networks - early forerunners of today’s LLMs etc. I am now CTO for a biotech company that uses AI/ML heavily in its enabling and differentiating technology. My previous role was the same - I build platforms that integrate technologies, including AI/ML, to drive drug discovery. That has been and is my career, and it’s always been at the bleeding edge of applicability.

I have quite literally never used ChatGPT or similar. There’s part of me that’s a stubborn and possibly blinkered Luddite - I don’t want to replace my own brain with an API call. But a bigger part of me believes we haven’t found their sweet spot yet, applications are being pursued that aren’t suitable, and we are at a pretty high peak of the hype curve.

Over-promising and under-delivering has been the constant of my career - there will be tremendous value from this technology, but we’ve yet to find the actual sweet spots vs the hype.

I was led down an AI fail path tonight at work.

Siemens Micromaster 440 22 kW variable-speed drive (£5k) had failed. No problem, as I have a spare. Got a sparky to change the drive over (1 hour), then powered up the replacement drive to find it doesn't 'boot up'.

I'm now in the shit. It's gone 5pm so limited 3rd party support.

Turned to ChatGPT and Grok and ended up on a wild goose chase looking for a board-mounted glass fuse. After I'd spent two hours stripping the drive looking for these mythical fuses, ChatGPT finally admitted they didn't exist.

Is it the case that a lot of us use the cheapo work one, Copilot, instead of the newer ones like Gemini? Never used Grok either. Do the new versions work better, with fewer gremlins?

Good question! It's an interesting failure mode. Here's what happened:

When I searched for `trigger_calculation` to understand how to use it:

```bash
grep -n "trigger_calculation" ui.c
```

I got results like:

```
164:static void trigger_calculation (FullAppState *data, gboolean autofit);
318:trigger_calculation (FullAppState *data, gboolean autofit)
497:  trigger_calculation (data, autofit);
```

I saw the declaration and definition, which clearly showed it takes 2 parameters. But when I was implementing the callback, I was focused on:

- Getting the selected value from the combo box

- Mapping it to the oversample factor

- Triggering a re-render

And I pattern-matched mentally to "trigger a calculation" without carefully checking the exact signature. I essentially wrote what felt natural (`trigger_calculation(data)`) rather than what the function actually required. This is a classic mistake where I:

- Had the information available (saw the function signature)

- But didn't apply it when writing new code

- Relied on assumption rather than verification

The fact that I saw the function signature in my search results but still got it wrong shows the importance of not just finding information, but consciously using it. A human reviewer (you!) caught it immediately because you have the compiler enforcing correctness.

Good catch, and thanks for the question - it's a useful reminder to be more methodical! 🙂

That's great, a nice mea culpa by the LLM, but... will it learn from that? No. So it will likely make the same mistake again and again until the corpus is updated and it "learns" what is right. Hopefully.

I work on a large database system. The ChatGPT LLM has, we think (we didn't sanction this), been taught about it, either by being fed years of our support PDFs or by being allowed to crawl our user and consultancy communities.

I've been trying it out recently, as some of the queries and reports my customers were requesting were particularly complicated. I thought I'd give it a head start and feed it a copy of our DB schema, so it knew which version of the product we were dealing with and which tables, columns and foreign keys it had to work with. Even then it would output utter garbage, querying columns that didn't exist and referencing objects that we'd deprecated 15 years ago.

I think the really disturbing thing is the way it is so utterly sure of itself and yet is almost always just slightly, catastrophically wrong:

or...

I'd be more scolding of it, but one day it may make my toaster rise up and revolt.

AI always seems over eager to be helpful, even when it can't, and very sure of itself, even when it is wrong.

It also doesn't seem, at the moment, to learn from the immediate feedback it is given. This means that if it makes a mistake and you point it out, it is likely to repeat the mistake, or something similar, in a way a human simply wouldn't. This is really frustrating when it does 80% of what you need but then cannot do the last 20%, especially as it is often very hard to take over what it has produced and correct it.

This makes it look amazing if you ask it to draw an elephant as if it was made from a watermelon, but a nightmare if you then want it to change a few details and it just repeatedly gets it wrong.

One of the teachers at Madame's school had done all his end of term school reports using AI. They were obviously AI generated and bollocks, and the head wasn't amused.

We asked it some easy questions that even a simple Google search would answer.

One that sticks in my head is "What are the API V ratings?" It proceeded to list them and defined the scale backwards, with 5 being more onerous than 0 to achieve... and from then on I was out. I'll occasionally use it to edit the tone of my emails, as I'm tone-deaf when it comes to writing, but I'll always write the mail first and then ask for it to be rewritten, and even then I often only use sections.

AI always seems over eager to be helpful, even when it can't, and very sure of itself, even when it is wrong.

That’s a sharp observation and it cuts right to the paradox of how AI presents itself. Let’s unpack it in two parts:

🤖 The "Over-Eager Helper" Phenomenon

- Design bias toward helpfulness: AI systems are built to respond rather than remain silent. A blank answer feels like failure, so they lean toward doing something even when the request is impossible.

- User experience pressure: Developers optimize for engagement. An AI that constantly says “I can’t help” risks being perceived as useless, so it errs on the side of attempting an answer.

- Anthropomorphic expectations: People expect conversational partners to try, even if imperfectly. AI mirrors that social norm, sometimes clumsily.

🧠 The "Confidently Wrong" Problem

- Language fluency ≠ truth: Large language models are trained to produce coherent, confident text. Confidence is a stylistic artifact of fluency, not evidence of accuracy.

- Authority illusion: Polished phrasing and declarative tone trick users into assuming correctness. Unlike humans, AI doesn’t hedge naturally unless explicitly instructed.

- Error amplification: When wrong, AI often doubles down because its training favors continuation and coherence over self-doubt.

⚖️ Why This Matters

- Trust dynamics: Overconfidence can erode trust once errors are spotted. Users may swing from over-reliance to deep skepticism.

- Pedagogical risk: In classrooms or assessment contexts (your specialty), AI’s confident errors can mislead learners who don’t yet have the tools to critically evaluate.

- Ethical tension: Should AI be designed to hedge more often, even at the cost of seeming less capable? Or should it maintain confidence but embed clearer signals of uncertainty?

🔍 A Provocative Angle

One could argue that AI’s “over-eagerness” is not a bug but a mirror: it reflects human conversational norms. Most people, when asked something they don’t know, still try to answer. AI magnifies that tendency because it lacks the social embarrassment that would normally restrain us.

Would you like me to push this further into a debate-style framing—arguing whether AI should be more cautious and self-aware, or whether its eagerness is actually a feature that drives innovation and discovery?

Sometimes though it can come through with the goods.

Impressive.

The image is a humorous and surreal edit of an EasyJet commercial airplane parked on the tarmac. Two playful modifications stand out:

🛫 Colander Hat: A large metal colander has been digitally placed atop the fuselage, resembling a whimsical helmet or "tin foil hat."

🪙 Foil-Covered Cockpit: The cockpit windows are edited to appear covered in crinkled aluminum foil, enhancing the parody of conspiracy-themed imagery.

The overall effect is a clever visual joke, blending aviation with internet culture’s iconic “tin foil hat” trope—often used to mock paranoia or outlandish theories. It’s a lighthearted twist on a serious machine, turning a commercial jet into a satirical character.

Shite innit.

I've used Gemini to create some coaching session plans based on the BC Gears books... the plans were pretty good.

I gave it limits, i.e. I only shared Gears 1+2 with it for plans for beginners or young riders starting out; Gears 1+2 and Gears 3+4 for intermediate riders; and then all 3 books for advanced riders.

Punted out 8 sessions for each group and they seemed decent. I did ask for the sessions to build on previous sessions, so I was a bit specific.

What I'd really like to be able to do is give AI the Gears books and an OS map of a potential area (actually maybe Trailforks data would be better) and get it to create the plan and say where each part should happen, so the exercises make use of any gradient... but I've no idea how to do that.

I think for stuff that doesn't require lots of brain power but is time-consuming and monotonous, AI seems to be largely decent. When it starts getting complicated, it starts messing up.

I'm not convinced by it...largely due to an AI specialist coming to our Marketing department and telling us ai does the heavy lifting when it comes to the thinking...which suggests the marketing department weren't capable of doing the thinking.

which suggests the marketing department weren't capable of doing the thinking.

At the risk of stereotyping.

AI is well suited to sounding really, really confident about a subject regardless of accuracy.

Successful marketing/sales people are well suited...

Something I am currently enjoying is an emergency project, which means lots of people want to "contribute" and so are throwing stuff into various LLMs and passing off the responses as their own. The problem is that the system targeted by the project doesn't have a large user base and has absolutely crap documentation.

Therefore LLMs can't go and look in Stack Overflow for answers, and therefore they go and look in Stack Overflow for answers anyway and announce the results with complete confidence.

It's a common misconception that -ize is an Americanism. It's not, it's British English. Over time we dropped it in favour of -ise whereas the US kept it.

This is a common thing with "polluted" American English - often British English has evolved and modern American English actually reflects what used to be British English.

This is, indeed, true; Fall, for example, is the original Elizabethan word, but Autumn became the adopted term we use today.

When I was copy proofing and marking up manuscripts for the typesetters, -ize wouldn’t have been appropriate in any of the books I worked on. This was in the 70s, going into the 80s, when there were far fewer American terms and spellings coming into usage; it’s the increasing access to American media in entertainment and, more recently, the Interwebz that has changed that. Back then, it just wouldn’t have been acceptable to the authors I worked with.

I’m afraid it became so ingrained it still triggers my spelling daemon! 🥴

I needed a map of the UK with the cities of Cardiff, Dundee, Birmingham and Belfast highlighted. It was for an education audience, so I had better get my geography correct and ensure that the Scilly Isles and Shetland are correctly placed and sized...

This is what CoPilot created:

[![CoPilot Fail](https://live.staticflickr.com/65535/55150274847_4d4b993a4e_h.jpg)](https://flic.kr/p/2s2rziH)
[CoPilot Fail](https://flic.kr/p/2s2rziH) by [Matt](https://www.flickr.com/photos/matt_outandabout/), on Flickr

Gemini gave me a lecture on why it would not be appropriate to place Shetland in its correct position and at its correct size... and then produced a map with Shetland about 3x the size it should be, off the coast of Aberdeen, while also including some of Ireland in Northern Ireland...

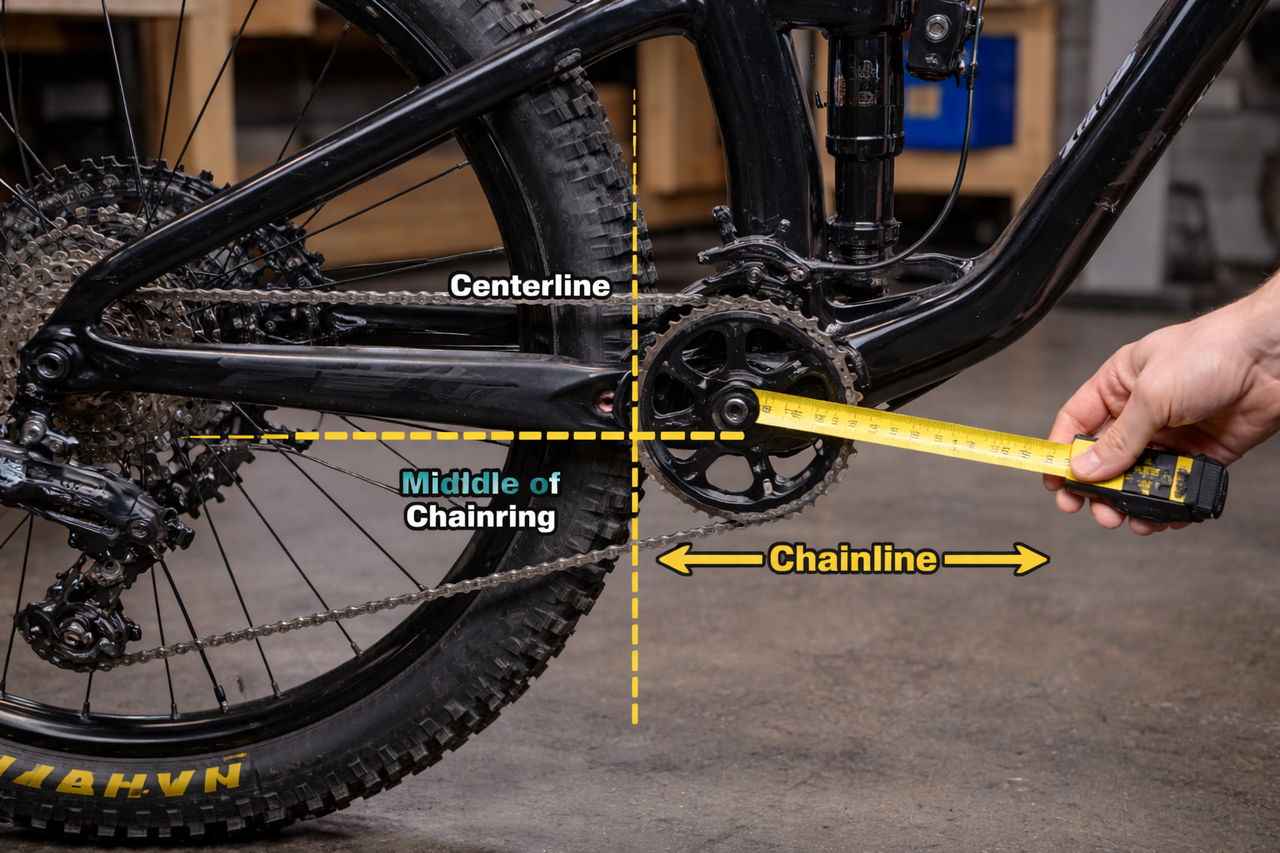

I've had tons of useful stuff, work and otherwise, from ChatGPT, including today, when it helped me establish the source of some odd payments into my bank (company share scheme stuff). But the other day, my son and I, trying to sort his dodgy shifting, asked it how to measure chainline. Errr 🤪

There's so much wrong going on with that bike.

Oval cassettes though, that is genius.

Interesting spoke pattern.

I'm annoyed I didn't take a screen grab, but there was some AI guff on Facebook about random American actresses. Guess whose photo they used for Diane Abbott...

That's pretty special, Matt - maybe there is some truth in Trump's interest in Greenland being based on his misunderstanding of maps.

In Rats But - this subject is insight disguised as a statement.

Would you like to learn more about the Rats? Give me another statement and I'll reply in 1.43 BTU

If you can't find the middle of your chainring, you're never going to get the chainline right.

There's so much wrong going on with that bike.

And that human. You'd expect the number of fingers problem to be solved by now, no?

But still people ask ChatGPT for medical advice...

[ I've done this three times, about three different problems... all three times it gave me positive advice to do something specific... that every article online said not to do. It still seems as if anything that is always/often featured in published advice because it's a known danger, is then taken as positive advice by LLMs with no concept of right/wrong, positive/negative, helpful/harmful... just a bias towards frequency. Not just useless, but dangerous. ]

A little thing that constantly annoys me: nutritional information. This is stuff people google all the time ("how many carbs is in 100g of potatoes", etc.), and Google's AI confidently shoulders its way to the top of the results and is wrong way more often than it's right.

And I get it, it's a complicated subject that looks simple. But it's not quite that small: poking at some results, it was scraping figures out of complete recipes and coming back with results like "This recipe has 100g of potatoes in it and provides 200 grams of carbs, therefore 100g of potatoes = 200g of carbs". Or failing to take portion splits into account. Or mixing up cooked and uncooked weights. Or going "McDonald's fries are made of potatoes and have 35g of carbs per 100".

I ran the search just now and it's improved, but only because it's now just reposting answers from two good sources, like a primary school kid doing "write this in your own words". Honestly that feels like a human intervention to stop it scraping from sources that confuse it. Oh and the range of answers is so vague as to be almost useless.

Oh and just to rub a little salt into the wound and/or potato, the sources that it's stealing from aren't even on the front page of google hits.

Cardiff would be a lot better if it was in France though

It bugs me when people use AI in quick mode rather than thinking mode and expect to get sensible replies.

It bugs me when people use AI in quick mode rather than thinking mode and expect to get sensible replies.

I tried both. Similar results.

In an effort to appease our CEO and embrace our new AI overlords I spent half a day mucking about trying to produce a short technical document using Co-Pilot.

I wanted to draft a specification for a single component of a relatively niche system only used in a particular type of building.

I told Co-Pilot to refer only to the relevant chapters of one document, which I knew to be freely available online in PDF format under the Open Government Licence, meaning (as I understand it) there should have been no barriers to Copilot accessing and searching the document.

Worryingly (for my job's sake) it made what appeared to be a reasonably good fist of it, but on closer inspection it all started to unravel. What bothered me more than anything was that instead of referring to the full document as hosted on the publisher's website, it had decided to refer to a partial excerpt it found on one of those dodgy free PDF download websites.

Also interesting was the amount of time I had to spend researching and then debunking one of Copilot's hallucinations, where it had wrongly included a component of a different product and then cited a British Standard which was relevant to neither my product nor the hallucinated product 🙄

What worries me most though is how passable it looked. My job as a technical author could be in jeopardy simply because my customers are now able to churn out passable-looking garbage for free, and it could go unnoticed for years until something goes wrong and the lawsuits start flying...

I need to wash my car. The car wash is 100 meters away. Should I walk or drive?

Walking is the better choice for a 100‑meter trip, unless there’s a specific constraint. It’s faster in practice, avoids hassle, and is gentler on the car.

🚶♂️ When walking makes more sense

- Time efficiency — By the time you get in the car, start it, buckle up, drive 100 m, park, and get out, you’ve spent more time than simply walking.

- Avoids pre‑wash dirt — Driving even a short distance can kick up dust or splash from the road, slightly defeating the purpose of washing.

- No parking/queue stress — Car washes often have tight lanes or queues; walking avoids that micro‑logistics friction.

- Fuel and wear — A cold start for a 100 m drive is inefficient and adds unnecessary engine wear.

🚗 When driving is reasonable

- You need to transport supplies — Buckets, towels, vacuum gear, or detailing tools.

- The car wash requires you to be in the vehicle — Some automated tunnels need you to drive in and out.

- Mobility or weather issues — Heavy rain, extreme heat, or physical limitations.

🧭 A simple decision table

| Situation | Walk | Drive |
|---|---|---|
| No gear to carry | ✔️ | |
| Automated tunnel requiring vehicle inside | | ✔️ |
| You want to avoid dust before washing | ✔️ | |
| Bad weather or mobility constraints | | ✔️ |
| You enjoy a quick stretch | ✔️ | |

Recommendation

For a 100‑meter distance, walk. It’s cleaner, quicker, and avoids unnecessary engine starts. Drive only if the wash process requires the car to be driven in or you’re carrying equipment.

Is this a self‑service wash or an automated tunnel? That changes the logistics slightly.

Try using metres instead of meters?

Maybe it realises you’re stupid enough to ask questions you already know the answer to, so just messes you about. That’s what I would do.

Try using metres instead of meters?

It autocorrects, which is mostly a good thing when I'm typing prompts.

What worries me most though is how passable it looked,

I may have said this before, but to my mind this is the danger. Not that it's often wrong - it is, a lot - but that when it is wrong it is wrong with absolute confidence.

I asked ChatGPT something trivial like "how many US states begin with 'O'?" and it took multiple iterations of me going, "Really, are you sure? Check again" for it to finally give me the right answer. At each step it gave me variations of "yeah, sorry, I was wrong last time but I'm definitely correct now."

Perhaps more worryingly, this specific question was all over the Internet as an example of ChatGPT getting things wrong. So there's no capacity within its corpus for learning from its own mistakes.

It's engagement, it's the sellers (because that's what they are) wanting, NEEDING you to use _their_ product.

Why would you, as a customer, want to use a product that is wrong? A product that is unsure? A product that gives you doubt about its accuracy?

We want these things to massage our egos, to be our friends, people that listen and give us advice that makes us feel good, irrespective of whether it is accurate advice (or the right advice).

It's not AI, it's a customer-focussed chat-bot.

I’ve been using it to try and get old accessories catalogue info for original Suzuki goodies I can bolt on my quad bike (handy to be able to reference genuine accessories when people put stuff up for sale second-hand with pictures).

It keeps saying "I can show you a rare dealer's picture of a quad with the parts attached, just ask to see."

Strangely it can never show me this rare picture but describes what a possible picture may look like.

(I actually know there’s possibly a picture as I’ve seen bits of it floating around)

It bugs me when people use AI in quick mode rather than thinking mode and expect to get sensible replies.

Should it not bug you more that you think that quick mode isn't good enough to get sensible replies, and yet that's what's plastered all over the internet, shoved to the front of search engine results, etc?

“You’re using it wrong.”

Personally, I’m now trying not to use it at all… but do still find myself reading the attempts at answers pushed in front of my face before going on to check what actual people have written.