It seems that the recent error in the COVID infection stats may be down to the fact that they used MS Excel to record the infections:

https://www.mirror.co.uk/news/politics/16000-coronavirus-tests-went-missing-22794820

I'm completely ignorant of how one should handle big important datasets and even I am surprised that they were using Excel for such a job. I don't know what kind of software they should have been using though.

Any of the nerds on here know what they should have been using instead?

My academic twitter is suggesting R.

But how usable that is for everyone in the chain is probably a limiting factor.

I reckon some modification of sap would have been ideal.

would be ready to work amazingly sometime in 2022

They should have used a relational database. if they have access to MS Excel then they'll have MS Access which is a very basic DB - but they'd have been better with something more professional and scalable such as MS SQL server or an equivalent, given the size of the datasets they're talking about (if they almost "lost" 16000 records in one day)

you can **** things up in any package.

some understanding of the limitations of the tools you are using would have been a start.

Cardboard index cards.

sap

Sold a pup?

For something this critical, I'd be recommending something industry strength like Mircosoft SQL Server or Oracle Database / MySQL.

I agree with Jambo - excel is a riotously poor choice

can't understand why they aren't using something like an AWS or Azure hosted SQL database because that would happily handle the scale, the availability etc that they need and can integrate with whatever analytical tools (including the AWS / Azure native ones). Of course they have half-arsedely chucked a load of data sources together and collected the cash.

From what I've read (tweets so could be a load of cobblers) the data is stored in a DB and it's the export to PHE where excel is involved.

So need some decent ETL software - FME for the win 🙂

If ever the words 'should have been a database' were applicable it is here

Edit:

From what I’ve read (tweets so could be a load of cobblers) the data is stored in a DB and it’s the export to PHE where excel is involved.

Ah, I feel better already. Still not sure just why excel would have been used but not that surprised

What software (not excel) should Serco have used

I don't believe Serco actually had anything to do with handling the test data.

My academic twitter is suggesting R

So a bunch of people claiming their tool is better than the other one without either knowing how they screwed Excel up (we know if was a max rows issue, but not where in the data pipeline that appeared etc), or understanding that their tool (R) is not a system for storing data, but is one for munging it after you've got it (e.g. by consuming a s/sheet!). They *might* have better stats from R but its entirely possible to loose data in R too; its also not impossible that the stats people are using R to consume the data and that adds to the lack of visibility of the missing rows.

Cardboard index cards.

BoJo's little black book?

Lotus 123 or Quattro Pro obvs 🤪

I very much doubt they are using Excel for anything other than ad-hoc reporting.

There'll be some sort of Serco branded AWS-powered serverless cloud thing that it'll be built in. I would hope.

Isn't the whole point that trying to have one centralised database for the entire country was a completely stupid idea in the first place?

All the experts were telling them that it should be devolved to regional health authorities who could then use their local expertise in this field. So which software they chose to use wouldn't be an issue as they wouldn't be trying to manage an enormous unwieldy nationwide system

But then we've had enough of experts in this country, haven't we?

And its working out really, really well

"we know if was a max rows issue, but not where in the data pipeline that appeared etc"

Twitter also suggests it was a max column issue, so each record was stored/sent as a column not a row!

Pretty much any modern relational database could handle that, it's not a huge amount of data. The main point would be to get someone competent to build it, manage it and of course do back ups.

You'd also need a front end, but again that's hardly rocket science for a simple data entry system.

Once you've got the data into a database you can then use other tools (such as R) to analyse it.

Edit: seems it might have already been in a database, and the problem was with the incompetant data extraction using an Excel.

Twitter also suggests it was a max column issue, so each record was stored/sent as a column not a row!

This is my understanding. They used columns for cases, which is extremely strange.

Isn’t the whole point that trying to have one centralised database for the entire country was a completely stupid idea in the first place?

No because it isnt. Having a centralised db to have the data fed into when it is something relatively simple like this makes sense. Means you dont need to mess around sending test results to the right location and also allows easy peeping next door.

Using regional vs centralised track and trace is a separate discussion. Either can plug into the centralised test results.

can’t understand why they aren’t using something like an AWS or Azure hosted SQL database

Squeamishness (justified or not) about putting what is basically medical records on the Amazon cloud?

Twitter also suggests it was a max column issue

This is the strange thing...max COLUMNS not rows, how is it laid out? What's in each column?!

I can very much imagine someone high up saying they want the data in Excel, but they want it at the most detailed level so they can do whatever analysis they want on it. Which leads to someone having to export it from SQL, and that export being used for the 'sitreps' to DH/ministers which causes the problem, but it seems to be something else as it's affected the actual tracing.

can’t understand why they aren’t using something like an AWS or Azure hosted SQL database

AWS RDS is pretty nice if you just want something that works without having to think about it. I imagine Azure is similar.

This is my understanding. They used columns for cases, which is extremely strange

I find it hard to imagine the data being stored like that but easier to imagine an interface file being asked for in that format

Squeamishness (justified or not) about putting what is basically medical records on the Amazon cloud?

Given their habit of handing data over to the big providers I cant see that kicking in now. Plus they are being used already for some fairly sensitive stuff (well them or Azure/Google not sure which got what).

I find it hard to imagine the data being stored like that but easier to imagine an interface file being asked for in that format

Doesnt really make sense for the sort of data. A wide file is fine when you have fixed columns but generally far easier to work with a tall file when that is uncertain (or indeed when there is a shedload of them).

You guys are funny.

Exactly what Bails says, you can have the most fancy cloud whizz bang system you can think of, the very, very first thing people will start doing is extracting data into Excel so they can make pivot tables.

However in this case it sounds like they are rolling up the data into a reporting system (not Excel) but haven't managed to integrate all the disparate sources, so are using excel extracts as the source for upload. And it will have been a super daft "oh yeah, I didn't realise that" error, like not clearing down what should be a temporary table. We'll never know unless they decide to tell.

This isn't particularly a failure of the technology, it's a failure of validation.

I'm wondering if the op is the IT manager for Serco ?

So a bunch of people claiming their tool is better than the other one without either knowing how they screwed Excel up (we know if was a max rows issue, but not where in the data pipeline that appeared etc), or understanding that their tool (R) is not a system for storing data, but is one for munging it after you’ve got it (e.g. by consuming a s/sheet!). They *might* have better stats from R but its entirely possible to loose data in R too; its also not impossible that the stats people are using R to consume the data and that adds to the lack of visibility of the missing rows.

I think the fact that they ran into a max rows (or cols) issue just paints a picture of an inept government department way out of their depth unable to handle large volumes of data. But yes there probably are legality issues with large cloud computing platforms and NHS data.

At least people that know how to use R probably have *some* experience of dealing with datasets.

Isn’t the whole point that trying to have one centralised database for the entire country was a completely stupid idea in the first place?

I wouldn't say so. Make "region" an additional category/variable within a centralised database and you can separate regions. In my experience its much easier to extract data from a bigger dataset, than making two semi dependent datasets into one big database.

to OP, Excel is not a bad tool, but it seems they hadn't taken into account its limitations. Which is a flop at a very basic level.

The way it works with data:

1. You have your interface to interact with the program/enter unprocessed data.

2. Unprocessed Data analysis and (processed and unprocessed) organisation.

3. Data storage(processed and unprocessed).

4. Data exposition/interrogation (for example, how many infected per region).

Excel can do all of the above in one package, however it does not excel at any of them.

My academic twitter is suggesting R.

R and excel are not mutually exclusive. Whatever you do with R, the data has to be stored somewhere, this is where they may have made the mistake.

Exactly what Bails says, you can have the most fancy cloud whizz bang system you can think of, the very, very first thing people will start doing is extracting data into Excel so they can make pivot tables.

100% this, I'll never understand the current circlejerk elitism towards some of the basic MS tools.

I very much doubt they are using Excel for anything other than ad-hoc reporting.

It appears that excel sheet fed the wider track and trace? Or have I got it wrong? Why else is it being reported that tracing potential contacts was delayed a week?

I think as TiRed pointed out, with just a few thousand being tested earlier in the year, PHE opted for a quick and dirty solution in excel and never got around to adopting a more appropriate solution as the scope grew.

I think the fact that they ran into a max rows (or cols) issue just paints a picture of an inept government department

Inept government department or an inept outsourced set of experts?

Dont forget the failure by PHE/NHS at the beginning with the lighthouse labs turned out to be Deloitte and mates.

Chances are with the tories ideology of handing money to their mates the actual PHE staff have had to hand it to some consultant just out of uni who only knows powerpoint and excel.

And it will have been a super daft “oh yeah, I didn’t realise that” error, like not clearing down what should be a temporary table. We’ll never know unless they decide to tell.

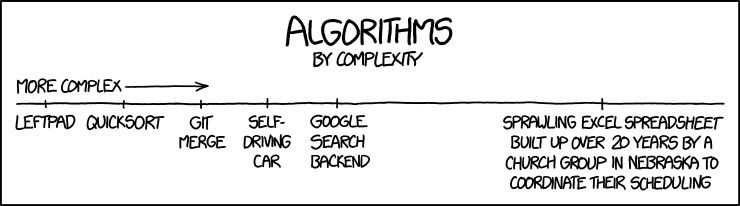

The sheet ran out of rows is what happened and data that was supposed to be appended failed to be appended and nobody noticed for several days. This was spun as an IT error but it seems someone didn't understand that excel has a row limit. As someone who used excel before Office 2007, I was accurately aware of this because it used to be about 65 k max rows but was changed to 1 million rows. If you didn't use excel before Office 2007 you may never have run into this hard limitation.

Inept government department or an inept outsourced set of experts?

I suspect it's not either / or.

Maybe they didn't want to buy licences for the database to cover all users so extracted to Excel for sending to Track & Trace? Where I worked for 20 years we typed out timesheets into Excel and somebody retyped them into SAP.

A colleague who was ex-Royal Engineers ('sappers') reckoned his RE dictionary defined SAP as "To destroy by undermining"

This is quite a good explanation from the Guardian. It seems that some labs were sending in their results as a .csv to PHE who would import that data into excel. A .csv doesn't have a row limit but an excel sheet does, ergo any rows over a million will simply be culled during the import, and this wasn't spotted initially.

Presumably, the labs were just exporting all their tests to date each day into the .csv too. It all sounds terribly unsophisticated with a lot of redundant data handling and everything hinging on a dodgy excel sheet.

A non scalable system? IT delivered on the cheap (but part of an expensive government contact)? No one should be surprised.

.csv is a pretty decent way of exporting raw data, and easy to import into a db, as long as they are delivered consistantly, even if they aren't then there are tools that aren't excel that can edit them really, really, easily to put them into the format you need to import them into a proper database system, like MSSQL or ORACLE, which is exactly the kind of thing PHE should have been using from the start.

It's also possible that they were importing into Excel 2003 which has the much smaller row limit.

This made me laugh a lot:

I’m wondering if the op is the IT manager for Serco ?

Thanks for all the input folks. I had a suspicion that the view on my social media that 'EXCEL IS BAD' was misguided, even if its limits may have contributed towards this error.

I heard they were using 32bit excel so it just broke when they tried to exceed the number of available cells.

When watching the news on Saturday with Wife I said I bet this 'technical issue' is something really stupid like an excel spreadsheet running out of rows, so it was funny this morning when she shared this article with me.

The bottom line is the problem is with the people, not the technology, better people would put a better system in place or at least have the wherewithal to do a sanity check.

It wouldn't surprise me if they were using an xls file which has a 65,536 row limit, rather than xlsx which has 1,048,576 limit mentioned in the Guardian.

I guess there were three issues:

1) Involving manual processes like emailing around CSVs and manually compiling into database - rather than having automated and auditable processes.

2) Spreadsheet application being used to database, rather than a database application

3) Stupidity

Given the profile/level of investment and >6mths this has been going on for I wouldn't have thought it unreasonable for some kind of controlled system to be put in place e.g. with SQL database backend and frontends to interface with various users i.e. test centre upload results and enter summary information, administrators generate reports, test and tracers generate and manage cases etc.

Thanks for all the input folks. I had a suspicion that the view on my social media that ‘EXCEL IS BAD’ was misguided

The main things about Excel are:

Its on most peoples work machine.

Most people have some vague familiarity with it.

It is pretty capable especially when you throw VBA into the mix.

It is relatively easy to do a quick prototype in.

So when given a choice about gathering some proper requirements and then standing up a DB with a front end on it Excel often wins out as a shadow IT tool.

Given the profile/level of investment and >6mths this has been going on for I wouldn’t have thought it unreasonable for some kind of controlled system to be put in place e.g. with SQL database backend and frontends to interface with various users i.e. test centre upload results and enter summary information, administrators generate reports, test and tracers generate and manage cases etc.

I'm no IT expert but haven't there been a lot of problems rolling out such systems in the public sector?

I know for a fact that fire rescue has no central/national database and neither does town planning. All their data resides at the local level on a hotchpotch of paper and electronic records. It also make FOI requests problematic because they can claim compiling the data imposes an unreasonable cost.

That Guardian link doesn't make a whole lot of sense, difficult to say where the stupidity was introduced...

I can understand why it's still using a cobbled-together system relying on CSVs and some sort of fudged back-end though, government IT stuff just doesn't work at the sort of pace required for this sort of thing (outside of some specialist departments but I guess they weren't engaged). If Serco created the process and back-end then they'd still likely have been tied into a formal proposal, budget, design and change implementation approval process, that can take months even for relatively small projects (I work for an external IT provider to a government agency, not Serco though :p ).

It wouldn’t surprise me if they were using an xls file which has a 65,536 row limit, rather than xlsx which has 1,048,576 limit mentioned in the Guardian.

... and a 255 column limit IIRC. If they were outputting data in a cross tab-esque format, one day per column and started the spreadsheet back on Jan 23rd it would have shat itself two days ago.

Not unusual to dump data to the old format either.

There are default choices in the IT world. Data goes in a database, like MySQL, Oracle, MS SQL or IBM DB2. Unless there is a special requirement to do something else, use one of these.

Given the profile/level of investment and >6mths this has been going on for I wouldn’t have thought it unreasonable for some kind of controlled system to be put in place e.g. with SQL database backend and frontends

I’m no IT expert but haven’t there been a lot of problems rolling out such systems in the public sector?

There have, but this is not because of the technology. It's because of the way that govt departments approach IT, and the way IT suppliers approach govt departments. The govt depts say 'You are going to give us exactly this' and the IT suppliers say 'ok! No problem!' then they realise that 'this ' is full of holes, and they either plough on regardless saying 'well that's what you asked for' or 'but this isn't going to work' and the dept says 'don't try and get smart with us that's what we want' etc etc. It's a complete balls up, usually. Sometimes the supplier's fault, sometimes the dept's, sometimes both, sometimes the issue is external. Sometimes the supplier's salespeople say 'yes' to everything then the techies say 'but you haven't thought of this and that' and then it all falls to bits. See the youtube video 'The Expert' for a very very accurate example of this.