Forum Replies Created

Jamis Dakar A2 review

shintonFree MemberPosted 5 years ago

I saw that article nn and also a video comparison

I must say I’m not keen on the second hand or the minute hand on the smaller 013.

On the 007 I picked up today it’s a J model and has Made in Japan written between the 7 and 6. I started looking into the J versus K model but gave up as there was so much conflicting information.

shintonFree MemberPosted 5 years ago@rene59 good steer on the Seiko SKX007 with the rubber bracelet. I picked one up overnight at Dubai airport for approx £190. Dual Arabic/English day function.

shintonFree MemberPosted 5 years agoNow that we’re ‘empty nesters’ all extra money is going into AVCs and I don’t expect to have the mortgage paid off before I retire. When that time comes we can either use the 25% tax free lump sum to pay off the mortgage balance or downsize and be mortgage free. If we go for the first option we can still downsize at a later date.

shintonFree MemberPosted 5 years agoIsn’t the house this one that was on Grand Designs – https://www.theguardian.com/lifeandstyle/2009/jan/03/house-architecure-highgate-cemetery

shintonFree MemberPosted 5 years agoI’m planning on going in 2020 for a big birthday treat. Possibly looking at renting a camper van for a week and taking in the French and Belgian WW1 memorials.

shintonFree MemberPosted 5 years agoAdam Buxton from the Adam and Joe Show is very good and has some great guests.

shintonFree MemberPosted 5 years ago@johnners you can use apps like Google Authenticator instead of text for 2 factor authentication. Just go into your security settings and you switch it on via the same page as the text verification.

shintonFree MemberPosted 5 years agoMy advice would be to find a good local mortgage broker and use them.

shintonFree MemberPosted 5 years agoRe Bill’s Mother’s – I remember Bumble explaining the origin to Athers who had gone through his entire playing career hearing it without knowing where it came from

shintonFree MemberPosted 5 years agoBlack Friday special at Eurosport.

Annual subscription for £19.99 available until 26th November.

Usual price is £39.99, although it is often on offer throughout the year. Last year it was a measly £1.99 on Black Friday, so it might be worth holding out to see if there’s a better offer, given this price is valid until the 26th.

shintonFree MemberPosted 5 years ago^^^^^^

After a recent blood test I was told I had too much iron in my blood. Currently being tested for hemochromatosis and if positive I will have to give blood every couple of weeks to reduce the iron, which is not great as I hate needles

shintonFree MemberPosted 5 years agoGreat delivery and refunds, but still a little piece of me dies inside when I hit the 1 Click button.

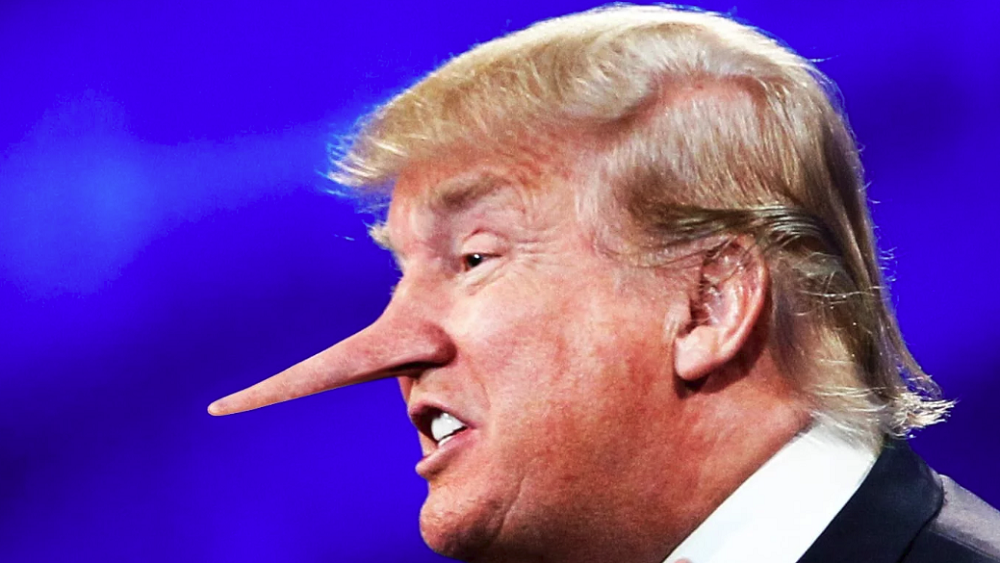

shintonFree MemberPosted 5 years agoHaving recently seen this image I see it every time this chump opens his mouth

shintonFree MemberPosted 5 years agopoolman sums it up nicely, essentially it all comes down to your attitude to risk. If I was offered 35x I would be very tempted, but only if that was part of my overall pension provision.

shintonFree MemberPosted 5 years agoMrs S has a Disco Sport and it’s had the DPF problem due to only doing shortish runs. It also has badly corroded discs, and JLR have now moved the goalposts on the warranty to say that it’s a “cosmetic” problem. She’s had proper Discos previously and I do prefer them.

shintonFree MemberPosted 5 years agoSpeed limit changes from National to 40mph about 1/2 mile before the pub so she’s bang to rights. Fairly recent change, but I guess it’s to stop cars going up someone’s trumpet while they are waiting to turn right at the pub junction.

shintonFree MemberPosted 5 years ago+1 for a taxi journey and use MyTaxi app to keep the price down. Also pack a water bottle as there are loads of drinking fountains to top up from.

shintonFree MemberPosted 6 years agoI watched Icarus last night on Netflix and just saw this story break about Beckie Scott who was in one scene – https://www.bbc.co.uk/sport/45840481

Icarus won an Oscar for best documentary so well worth a watch.

shintonFree MemberPosted 6 years agoMadeira? Fireworks are better than Sydney IMHO and you can get some good riding in as well.

shintonFree MemberPosted 6 years agoIf you use OneNote just write your notes the normal way, scan with Office Lens phone app and sync with OneNote. Zero cost solution.

shintonFree MemberPosted 6 years ago+1 for the SSD. I did this about 4 years ago and it made a huge improvement. My BIOS date is 26/12/2007 and I’m running an Intel quad core processor also released in 2007 with 3GB RAM, Windows 10 and I’m finally looking to replace it with another Windows PC at Black Friday.

Also have a 2017 MacBook Pro for work, iPad, iPhone and iWatch which are all great but I can’t justify £1,749 for a 27″ iMac

shintonFree MemberPosted 6 years agoNo, but my model above comes down to £7.50 if you select ‘Needs Repair’. Mine was starting to get quite slow on charging and used to crash a couple of times a week, but otherwise in good condition for 6 years old.

shintonFree MemberPosted 6 years agoThere may be a problem down the line if things start to get tough financially for the company. You will be on a higher salary than others doing the same role, and that is a key factor when HR decide who to ‘cull’.

shintonFree MemberPosted 6 years agoListening to TMS, preferably during our summer. All seems right in the world.

shintonFree MemberPosted 6 years agoRather than make overpayments on the mortgage you may want to consider putting that amount into a stocks and shares ISA or pension AVCs. All depends on your attitude to risk, tax situation, age, etc.

shintonFree MemberPosted 6 years agoI had a mechanical today so had to get fixed up at the bike shop. Plenty of ebikes for hire in the shop and the mechanic took a couple of calls inquiring about ebikes while I was waiting.

Top service from the bike shop BTW

shintonFree MemberPosted 6 years agoDid a 20 mile Lakes ride in May 2014 and repeated the same ride today. Got a puncture in the same place both rides and ended up fixing it on the same piece of grass. Anyone else had a puncture on the small climb after the gate as you leave Grizedale and get to the ridgeline overlooking Coniston?

shintonFree MemberPosted 6 years agoThe 2 pint limit that is bandied around is something that I grew up with in the late 70s. Whether that was a way for the police to give advice on what the limit was I don’t know, but what you have to remember is that drinking and driving was a massive problem and the law was only introduced in 1967. Pretty much everyone I knocked around with stuck to 2 pints if they were driving, with the odd exception who would have a couple more. In fact I don’t remember many people at all saying they wouldn’t have a drink if they were driving.

I was stopped one Bank Holiday lunchtime after drinking 2 pints of Pedigree and passed the breathalyser, and I don’t know anyone who has failed on just having 2 pints.

These days I’m not confident I could pass having had 2 pints, so only have 1 if I’m driving.

shintonFree MemberPosted 6 years agoDomestique by Charly Wegelius is a good read if you want some insight into the day to day life in the peloton. Also didn’t know about the incident at the world champs. No more spoilers.

shintonFree MemberPosted 6 years agoMoving from milestone based maintenance to condition based maintenance is a massive cost saving, and IOT/Digital twin, yada… is an enabler.

To Mike’s point about petabytes of data it doesn’t have to be that way. You have the choice to keep that data, and if you keep it on S3 or even Glacier on AWS, or Blob storage on Azure it should be relatively cheap. But 99% of data from an IOT device is going to be in an acceptable range so you can actually build a case for only keeping the data that matters.
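
A rough sketch of that "only keep the data that matters" idea in Python. Everything here is made up for illustration: the function name `keep_out_of_range`, the sample readings and the 10 to 30 acceptable band are assumptions, not anything from a real device or cloud SDK.

```python
def keep_out_of_range(readings, low, high):
    """Return only the readings that fall outside the acceptable [low, high] band."""
    return [r for r in readings if r < low or r > high]


# Hypothetical sensor values; most of them sit inside the acceptable band.
readings = [21.5, 22.0, 21.8, 35.2, 22.1, 9.4, 21.9]

# Only the out-of-band values would be sent on to storage.
to_store = keep_out_of_range(readings, low=10.0, high=30.0)
print(to_store)
```

In practice you would probably also keep an occasional in-range sample or a summary so you can still see trends, but that is a design choice rather than a requirement.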

And there will only be a small number of use cases where you can get into petabytes. I just looked at my Garmin and a 3 hour ride has a file of 116k, so I would need to do 8,620,689,655 of those rides to fill up a petabyte.
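
For anyone who wants to check the sums, here they are in Python. The assumptions are that "116k" means 116,000 bytes and that a petabyte is the decimal 10^15 bytes; with binary units the answer comes out a bit different.

```python
PETABYTE_BYTES = 10**15    # decimal petabyte (assumption)
RIDE_FILE_BYTES = 116_000  # "116k" read as 116,000 bytes (assumption)

# Whole number of ride files that fit into one petabyte.
rides_needed = PETABYTE_BYTES // RIDE_FILE_BYTES
print(f"{rides_needed:,}")  # 8,620,689,655
```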

AWS and Azure will mop up most of the infrastructure behind this as it can be a real hassle to worry about device management, security etc. Google have some pretty cool stuff around AI and analytics but you can run TensorFlow (great product) on AWS anyway so why bother with Google Cloud Platform?

shintonFree MemberPosted 6 years agoThe price point of IOT devices is so low you can pretty much put them on anything:

The Dairy Monitor solution of Connecterra (www.connecterra.io) uses high-tech pedometers mounted on cows to very accurately sense its movements (Pretz, 2016). The solution is scalable and directly connected to a cloud services platform with advanced algorithms for predictive analytics. Connecterra actually creates Digital Twins of cows that are used to remotely monitor cows and to detect when a cow is in estrus (in heat) and to monitor its health. The Dairy Activity Monitor is able to provide multiple behaviour detection and predictions including animal heat & estrus cycles, health analysis and also provide a forward looking prediction of the next cycle start dates. The devices learn and tune their behaviour based on the individual movements of cattle. Furthermore, Connecterra provides location services that track and trace the movements of dairy cows giving you an accurate measurement of the free-grazing time per animal.

shintonFree MemberPosted 6 years agoYep, the Bet365 offers have been excellent but I only get the £25 offer and not the £50. Still, FA Cup Final, Champions League, Oaks and Derby free bets have been generous.

As you say I just need to place a few more normal bets so I don’t get gubbed by the bookies. Signed up with oddsmonkey which makes things really quick for picking the right bets.

shintonFree MemberPosted 6 years agoApologies for starting another thread, I’ve given up on the Search function. The scheme I’m in is Barclays and people are transferring out in their hundreds due to the good transfer values. Also, Barclays have decided to move the pension scheme out of the Retail arm and into the ‘Casino’ arm which has me worried long term although it will be protected to some extent if it goes pop

shintonFree MemberPosted 6 years ago1 mile of quiet road to the top of the skills area at Delamere. Not that I have any skills.

shintonFree MemberPosted 6 years agoNot as ugly as its big brother, and I can get my 650b+ in without the wheels off. But it is a big car:

The F34 is based on the F35 chassis used by the Chinese market long wheelbase sedan. It has a wheelbase that is 110 mm (4.3 in) longer than the F31 3-series estate. The roof is 79 mm (3.1 in) higher than the F31 and the width is increased by 17 mm (0.67 in).

shintonFree MemberPosted 6 years agoRecently bought my first car with a heated steering wheel, and for someone like me with poor circulation I’m never going to be without one in the future.

@Kryton57 – how about a 3 series GT as long as you haven’t got a dog? Bigger boot than the touring with and without the seats down, and a much smoother ride.